Nerds vs Suits

Management: “that’s good enough, crack on with it”

Nerds: “Can you just sign that you are happy doing nothing… you accept the risk.”

25/02/2026 Podcast

If you’ve ever tried to explain an OT security risk to a board and watched their eyes glaze over, you already know the problem. It’s not always negligence. Often, it’s translation.

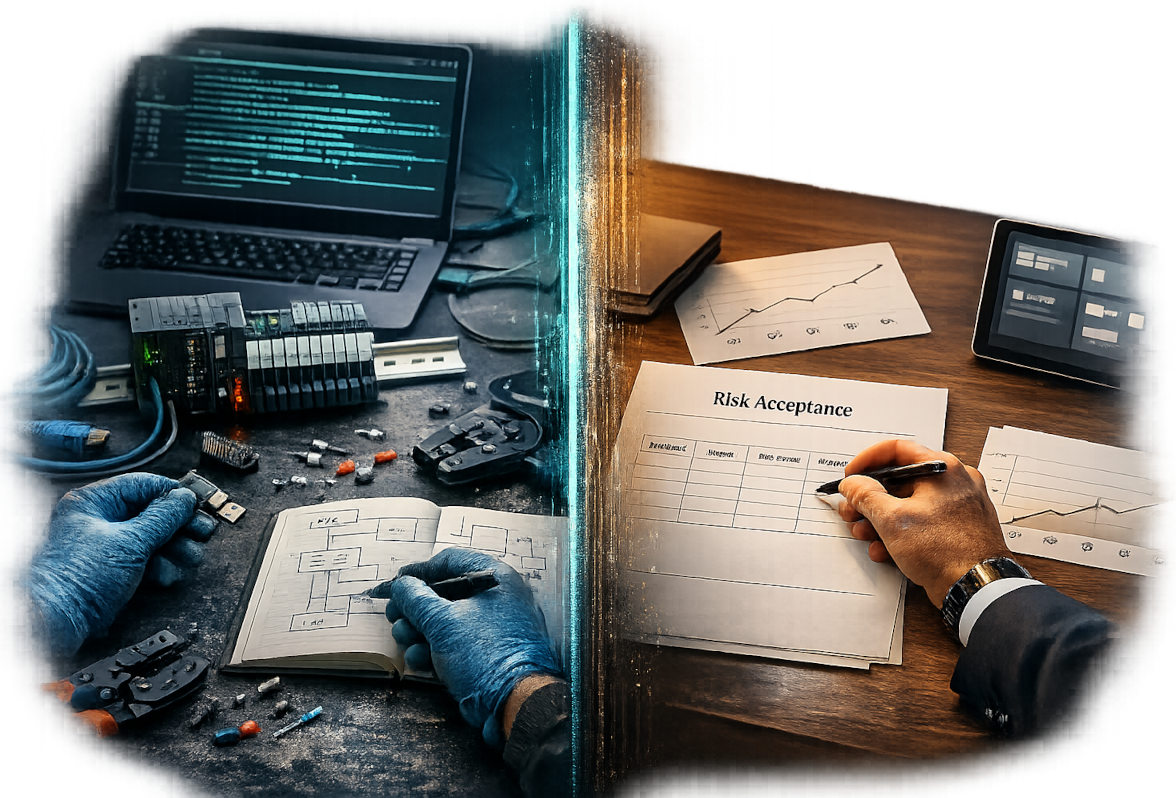

In this episode of You Gotta Hack That, Felix talks with Alex, a consultant and sales lead who sits right in the middle of two worlds: the frontline engineers who see the fragility of industrial systems up close, and the management layer that needs to ship, hit targets, and keep production moving. Alex frames it bluntly: suits versus nerds, and the phrase “good enough” is usually where the sparks start.

In IT, “we’ll patch it later” can be annoying but survivable. In OT, failure cascades. Downtime is measured in production loss, safety risk, contractual penalties, and reputational fallout. Felix points out a hard truth about safety systems too: if your safety valves and interlocks are already firing, you’re in last resort territory. Bad things are happening; the system is just trying to make them less catastrophic.

So why does funding still stall? Alex’s take is simple: management often comes up through IT, hears “factory”, “offline”, or “air gapped”, and assumes the risk is contained. Meanwhile, the OT teams are shouting in technical language that does not land.

Alex pushes a practical framing: if a £100k investment prevents a shutdown that costs £24m per day, the decision becomes easy. The issue is that many orgs never put that story in front of decision makers in a way they can act on. Risk appetite exists, but it needs numbers, consequences, and clear options.
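To make that framing concrete, here is a minimal sketch of the break-even arithmetic using the two figures quoted in the episode. The function name and the assumption of a 24-hour production day are illustrative and do not come from the conversation.

```python
# Illustrative sketch only. The £100k and £24m/day figures are the ones
# quoted in the episode; the function name and the 24-hour production
# day are assumptions made for this example.

def break_even_downtime_hours(
    investment_gbp: float,
    loss_per_day_gbp: float,
    hours_per_day: float = 24.0,
) -> float:
    """Hours of avoided downtime needed for the investment to pay for itself."""
    if loss_per_day_gbp <= 0:
        raise ValueError("loss_per_day_gbp must be positive")
    return investment_gbp / loss_per_day_gbp * hours_per_day


hours = break_even_downtime_hours(100_000, 24_000_000)
print(f"Break-even after about {hours * 60:.0f} minutes of avoided downtime")
```

On those numbers the spend pays for itself if it prevents roughly six minutes of shutdown, which is the kind of single figure a board can act on.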

One governance move Alex recommends is forcing explicit risk acceptance. Write down the risk, the likely impact, and the remediation, then ask the accountable person to sign that they accept doing nothing. It sounds confrontational, but it focuses attention and leaves a paper trail.

The most useful pivot in this chat is away from shiny controls and towards recovery. Alex argues that the industry talks a lot about “protect, detect, respond”, but far less about how quickly you can stand the plant back up after a hit. In OT, “switch it off and on again” is not a plan. Recovery time objectives, validated backups, and rehearsed restoration procedures are what decide whether you lose two days or a week, and whether the board gets a phone call from the press.

Felix brings in the double empathy problem, the idea that communication can fail both ways, especially across neurodivergent and non-neurodivergent styles. Alex backs it with lived reality: brilliant specialists can assume others “should just see it”, which creates friction, then silence. The fix is not dumbing down, it’s changing the packaging. Sometimes that means explaining like someone’s six, sometimes it means translating into pounds, downtime, and accountability.

Felix (00:00)

Hello, I’m Felix and welcome to You Gotta Hack That. This is the podcast all about the security behind the Internet of Things and operational technology. In this episode, I am joined by Alex. Alex, would you like to introduce yourself?

Alex (00:15)

My name is Alex Ward and I’m a consultant and sales lead. I pretty much am up to my eyeballs in OT security and cyber security overall.

Felix (00:27)

Wonderful. I’m going to go so far as to say that anything you say in this is probably your own opinion and not that of your employer and…

Alex (00:34)

And thank you very much for the safety net.

Felix (00:37)

We met at a conference a short while ago and we decided our opinions somewhat differ at times because of our experiences in life. And that seemed like a really good thing to explore to me. So tell me about what you see, because this is sort of the dichotomy, isn’t it?

Alex (00:56)

Instead of dichotomy, could we say suits versus nerds? Can we say management versus frontline OT security? Because from my view, and I’m definitely on the side, so I can see the management side of it and I deal with the frontline troops, so to speak. And the words “good enough” from management, that’s good enough, crack on with it, I know that is a red rag to a bull as far as cyber security is concerned.

Felix (00:59)

Indeed we can.

Alex (01:23)

I’m playing devil’s advocate for the sake of this. A podcast that just agrees on everything isn’t any fun. The cyber process always slows stuff down. Now, whether that’s IT or OT, there’s been a history of people switching off their VPN so they could just get something done. And we need to discuss that. And also risk appetite. Risk appetite cascades down and causes all sorts of issues because the management aren’t properly aware of the risk associated with failure in OT. Now I’m not saying all management are thick. What I’m saying is that they’ve come up probably through the IT world and they are not understanding why the security dudes are asking for so much money and it’s a factory, it’s offline, or “I thought we’d air gapped this”. All sorts of weird and wonderful statements that means they don’t need to fund OT security. And if someone had told them a hundred thousand pound investment can save them from the factory shutting down, costing them 24 million quid a day, they would happily give you a hundred thousand. But no one’s really explained that to them. And one of my chief worries and concerns about the future is supply chain attacks. The National Cyber Security Centre, Ofgem, Ofwat, they’ve all made statements, but I think that a lot of work has to be done to really protect us.

Felix (01:29)

Not helpful. Yes.

Felix (02:49)

Yeah.

Alex (02:50)

Anyway, that was a bit serious at the end, I apologise.

Felix (02:52)

No, I mean, it can be quite a serious topic. Ultimately, some of these operational technology services that we’re talking about present a threat to life, or they protect life in some circumstances. For them to go wrong can be quite serious.

Alex (03:05)

Yes. HR and/or the C-suite would have responsibility for human life, you know, oil rigs and that sort of thing. And so they’re very well documented and people invest a lot of money in it. If you’ve got safety processes, manual and run by process and machine, and a person dies, someone’s going to prison. Precedent has been set that if a third actor, an attack, just vandalising the system, and by accident damages a safety protocol, safety process, and somebody dies, then holy moly, that’s a terrible situation. Because it was just a nefarious attack. They didn’t target it, they were just trying to break the system and now someone’s dead. It’s terrifying.

Felix (03:50)

You’ve touched on quite a few bits there for me, Alex, because what you’re talking about is like a third party being involved in a service shutting down or whatever, and then bad things occur. Proving that the third party was actually involved in that is often very difficult. Proving that their actions actually led to that circumstance is very difficult. But also, I mean, a lot of people turn and say, “Well, yeah, but we’ve got loads of safety features built in.” And I’m like, “Well, yes, but those safety features are for some circumstances, it’s not covering all circumstances.” Like those safety valves, you know, if it gets to the point where the safety systems, such as a valve, are kicking in, they are the moment of last resort and probably bad things are still going to happen. It just will be less bad, if you see what I mean.

Alex (04:38)

We have a cyber range. That cyber range, our apprentices flip switches, whether it’s a PLC on a National Grid. And so we can show what can be done if you know what you’re doing. And we know the processes in which to protect it. And also the grid is one of the best protected things in this country. CNI is serious, the National Cyber Security Centre overview. But it still goes back to what message is coming up from the cyber dudes to the management saying, “This is the risk.” Sometimes the language is different. Although I’m in cyber security, some of the super subject matter experts within Thales, I mean, I can listen to each word, I understand it’s constructing a sentence, and then finally a paragraph, and I’ve understood one word they’ve said, because it is so specific to that problem. And you can’t expect that to translate through two layers of management and turn it into some funding for more firewalls or widgets or whatever these cyber dudes want. So I think that, like all great relationships, it’s a two-way thing. And I think management needs to bring in the cyber to say, “Can we now talk about risk and try and put some numbers by it.” There are some very clever formulae about risk. I mean, if there are no attacks, what are the risks? If there are no insider threats, what are the risks? And as you ramp up, well actually, a bad process could switch that off. And you slowly build up your risk tolerance and you find out how to reduce that. It’s just a process. We do that with C-suite all the time, as do a lot of other consultancies. So there is a proper discussion, but it just means that the management have got to say, “Actually, I’m interested in this,” and the techies have got to say, “Look, I need to get this message up there because right now we are exposed.”

Felix (06:37)

I think this can be an incredibly frustrating process to go through. I regularly find myself and people I’ve worked with in the past talk about the fact that they’ve been shouting about a particular problem for what feels like ages and nobody ever takes it seriously, or the opposite decisions are made. From my own experience of this, you believe you’ve explained the situation clearly. You’ve kind of portrayed what the possible outcome could be and you’ve given the options, you’ve gone through the process that you’re asked to do, whether it’s produced as a white paper or an options paper or something. And then suddenly you end up in this situation where it seems like it’s being ignored. That’s quite disheartening. I hear lots of people talk about this.

Alex (07:19)

But it goes back to communication because the way, and I’ll say this broadly, the way you, Felix, communicate isn’t the way an accountant would communicate. It isn’t the way a sports star would communicate. So for example, if I may be so bold, if you were working for a big company and you were in charge of your OT estate and you decided, you know what, this is your risk. One of the methodologies to get people’s attention and for them to make the right decision, yes or no, is to get them to sign it off. So you say, “Look, this is the risk that I believe. These are the consequences that I believe. This is how we fix it, or whatever you want to do. Can you just sign that you are happy doing nothing. Just say that you accept the risk.” And as soon as someone is asked to sign something, they get focused on it. You’ve got to admit it’s a good idea. Come on, admit it is a good idea.

Felix (08:07)

Yeah. I support that in many ways and I think in some circumstances that would absolutely work. But I think there are quite a lot of situations where people have signed off on risk, but then when push comes to shove, they go, “Well, I didn’t really understand it.” People are put up in front of a jury of some description or in front of Parliament or whatever and they turn around and go, “Yes, but we did some stuff and things and I was told that it was going to be okay.” And when you actually boil it down, they probably spent about 15 minutes on it once a month thinking about those particular problem sets because they are difficult. They’re subtle problems where subtle problems have major impact, and you make major changes to the situation.

Alex (08:56)

Perseverance, I’m afraid, is the only thing that we can talk about because you’re talking about the cyber guy talking to the board guy and putting it in words rather than in technical terms. Put it in money terms. The risk of this is one million quid. To offset that risk, it’s one hundred thousand pounds. Your ROI is that that bad thing has got a 90% chance of never happening, so nine hundred thousand. That’s your return on your investment.
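Editorial aside: one way to read Alex’s figures is as a simple expected-loss calculation. The sketch below assumes his “90% chance of never happening” means the mitigation removes 90% of a £1m expected loss. That interpretation, the function names, and the net-benefit step are assumptions added here, not something stated in the conversation.

```python
# Illustrative sketch only. The £1m loss, £100k cost and 90% figures are
# Alex's; treating 90% as the share of expected loss removed, and the
# function names, are assumptions made for this example.

def avoided_loss(loss_gbp: float, probability_avoided: float) -> float:
    """Expected loss removed by the mitigation."""
    if not 0.0 <= probability_avoided <= 1.0:
        raise ValueError("probability_avoided must be between 0 and 1")
    return loss_gbp * probability_avoided


def net_benefit(
    loss_gbp: float, probability_avoided: float, mitigation_cost_gbp: float
) -> float:
    """Avoided loss minus what the mitigation costs."""
    return avoided_loss(loss_gbp, probability_avoided) - mitigation_cost_gbp


avoided = avoided_loss(1_000_000, 0.9)
net = net_benefit(1_000_000, 0.9, 100_000)
print(f"Avoided loss: £{avoided:,.0f}")
print(f"Net benefit after mitigation cost: £{net:,.0f}")
```

Under that reading, the £900k Alex quotes is the avoided loss; subtracting the £100k spend leaves a net benefit of £800k.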

Felix (09:22)

Why is it so common then for organisations to, once they’ve had a breach, to suddenly spend an awful lot of money on this sort of thing? You know, after it’s too late, even though the people who’ve been talking about it have been saying these are the problems. I mean, is it purely, do you think, down to that communication again, that it just failed in the first place and now there’s a very stark example? The communication is not necessarily any different, but someone’s paying a bit more attention.

Alex (09:46)

There’ll be lots of soul searching. The techie guys have done everything in their power to tell. And the CEO has actually said, “Look, do you know what? I’ve seen all your forms. I’ve signed off because I cannot spend the half a million quid you’re asking for. Goodbye.” And six months later, it happens. And then they realise, gosh, now it’s a million quid to fix the problem. Most of our customers have done one. And I’ve got the Daily Mail knocking on my door. You know, the reputational damage is, this is a five-year return where we were because we’d be knocked back. So yes, unfortunately, because they think they’re going to get away with it until they don’t. Is that human nature?

Felix (10:12)

Yeah.

Alex (10:16)

Yes.

Felix (10:22)

In 2026, the You Gotta Hack That team has two training courses. On March the 2nd, we start this year’s PCB and electronics reverse engineering course. We get hands-on with an embedded device and expose all of its hardware secrets, covering topics like defeating defensive PCB design, chip-to-chip communications, chip-off attacks, and the reverse engineering process. On June the 8th, we launch the unusual radio frequency penetration testing course.

We dig into practical RF skills so that you can take a target signal and perform attacks against it in a safe and useful way. Both courses are a week long. They are a deep dive, they’re nerdy, and we provide everything you need other than your enthusiasm. As the unusual RF penetration testing course is brand new, you can be one of our beta testers and get £1,000 off. There’s more information available on our website at https://yougottahackthat.com/courses. We recommend booking straight away as we have to limit the spaces to ensure the best learning experience.

But for now, let’s get back to today’s topic.

There are a lot of people out there who would happily claim that something is secure or uses bank grade encryption or that kind of stuff. And I cringe every time I hear this because it’s horrific. I mean, there is no such thing, in my opinion, as a secure system. There are grades of…

Alex (11:45)

Because you’ve the insider threats. You’ve given them all the usernames and passwords.

Felix (11:49)

Yeah. I’ve been, there’s so many different issues with this, right?

Alex (11:54)

One thing that I find that the industry doesn’t, and I mean the cyber security industry doesn’t perhaps give as much airtime to as it should, is the recovery. So it’s called business continuity. And so every, if a BCP consultant now would be listening, we talk about business continuity, everyone talks about business continuity. But there’s a difference between talking about it and actually putting it in place. If you go into some of the shows that we went to, it’s “protect, detect, respond” is often there. But if you get knocked on your arse, and let’s say there’s a high opportunity that it will happen in the next three years, how quickly can you get back up on your feet? Switching it off and switching on again isn’t going to work because it’s a factory, lads. It doesn’t work that way. I’m beginning to steer a lot of my conversations with the customers I’m talking to. Look, here’s the process and here’s IEC 62443 and all that stuff, but can I please, can we look at what budget’s available for, imagine in the worst, ransomware, how quickly can you respond? Because it’s not that you get knocked down, it’s how quickly can you get back up again. And I think if people start considering what would be needed after a big attack, the funding might start shifting as well. Do you really need all those firewalls? Because if they’re going to get breached because there’s a known back door or whatever, all the big ones have announced that we found something, it does happen, of course, IBM, everybody. The response to it is almost as important as the defence. So you need to spend enough money to show that you’re a victim if you do get hit, but you haven’t left the back door open because you’re negligent. But then you also, as the IT guys or the OT guys, need to be able to say, “Well, we got whacked. It’s going to take us two days to get up and running,” rather than a week. That’s the point. And I think we need to discuss that a little bit more.

Felix (13:28)

It does happen, right?

Felix (13:57)

Absolutely. I mean, a lot of these topics you could break down into whole episodes in their own right, to be honest. Because each of these, they’re all interlinked. They’re all kind of absolutely part of the same broad set of problems. Maybe we have several episodes about this, Alex. We’ll see how we get off.

Alex (14:15)

We could, we could, but we need to do it over lunch with wine and beer. Just think how productive we would be.

Felix (14:20)

Yes, yes. Yes, I don’t know about you, but I like to go to sleep if I have too big a lunch and too much wine. But anyway, I think it’s really interesting what you’re saying about the recovery times, because this plays into something that I’ve observed recently, which comes to mind, which is what we’ve been talking about in the kind of IT world, is the kind of model of assume breach. You know, like assume there is a problem, someone’s already in here, they’re already doing stuff. How do you detect it? How do you eradicate it? How do you recover and make it like impossible to redo the same problem? And that’s a really difficult problem set, right? If you’re in that position, that’s squeaky bum time. I don’t think I’ve seen it at all in the OT world. I’m not sure that’s a thing that we really talk about in the same manner. People assume that the air gap has worked and that the OT systems are, you know, too bespoke, too complicated to whatever, and therefore nobody’s actually breached them yet. Well, I’m not sure that’s going to be true.

Alex (15:26)

I’ve got a couple of stories.

Felix (15:28)

We like a good story.

Alex (15:30)

But since this is a public thing, I will, I’m going to redact an awful lot of statements. Shall we just say, let’s pretend that some nefarious characters, and it’s like an episode out of Billions if you’ve ever seen that. Let’s say that if you put a GPS tracker on a mining company, and all the mines dig up stuff, dump it into a lorry and the lorry moves for the raw materials, and that GPS tracker over time, you could then look back and say, “Well, hang on a second, those lorries are very busy,” which means a lot of raw materials six months later, whatever it is, copper, gold, iron ore, pop out. And there’s a lot of it, which means the price will go down. But if those big dump lorries, or whatever they are, slow down, you’re going to have less of it in the market, which means the prices are going to go up. Once you have, so let’s say 12 months with the data, you can get good at fixing the betting on the price, whatever commodity.

Felix (16:27)

Cool.

Alex (16:28)

Now, that’s pure OT. There is a digger, there is a man with a digger, there is a truck driver, and it goes into a hopper. Very little IT. But it’s social engineering, isn’t it?

Felix (16:42)

Yeah. Well, there’s elements of all of it there, isn’t there. There’s the nerdy bit, there’s the social bit, there’s all sorts. There’s about understanding the mechanics and how to make that monetised as, yeah, there’s huge amounts to that.

Alex (16:55)

Yeah, yeah, and it’s our job to spot that. I mean, why is GPS data leaving the network? How did it leave the network? And those sort of things. So you need to do a little bit of digging around. But yeah, that goes on. And we need to come up with answers for that as well.

Felix (17:11)

Well, yes, quite. I guess if you’re making the assumption that that has happened at your organisation, well, how do you tackle that? You know, how do you even prove that it has or has not happened? Proving a negative is invariably quite difficult, as science has proven over many, many years. I think a lot of this comes down to communication, like we identified fairly early on in this conversation. I think that the whole “nerds can’t talk very well and suits don’t understand it” is kind of the thing. Have you ever heard of the double empathy problem? No. OK. I mean, this actually applies to neurodiversity, which, in my opinion, is fairly prevalent in cyber security.

Alex (17:50)

Yes, let’s say amen for that.

Felix (17:52)

Yeah. Well, if you assume for a moment that some of the other people in that space are in fact neurodivergent, the double empathy problem is where you can prove that one party is communicating and they believe they’re doing a pretty good job of it. The other party is not receiving and not understanding, but their communication in the opposite direction is also not actually what the person receiving it needs to be. So both directions are failing to communicate in both directions, if that makes sense.

So the double empathy problem has been proven by science. There have been studies that show that two people with neurodiversity communicate brilliantly. Two people without neurodiversity communicate brilliantly. You cross those paths and it falls to bits.

Alex (18:34)

I’ve got many, many experiences of that. The most prevalent one was one of my colleagues, a super clever dude, didn’t understand why no one else could see what he could see. So when you asked him a question about the problem, there would be anger. “What do you mean? Why are you asking these questions? It’s obvious.” Well, it is to him. It is not to me. And because he goes cross at you, you’re like, “What else can I ask?” and you just move away. And it has begun from that. So over time, you understand a little bit more about human beings. And you just go, “Look, I’m really sorry. I’m not reading your mind, but this is important. I need to leave this conversation understanding what you…” and now can you just tell it to me like I’m a six-year-old? And then that helps, you know, because you’ve admitted you’re stupid. You’ve admitted that you can’t read his mind and then, quietly, they go, “Oh, OK.” Yeah. “He’s wearing a suit on this call, I’m in my Def Leppard black T-shirt that I haven’t washed for a week.” So this is communication, but it’s when you recognise that that communication is bad, then you can fix it. In the olden days, I am older than you, Felix, so I can say back in the day, the way that would transpire in its brutal sense would be, “Well, you can’t put so-and-so in front of the customer. Never, never, ever put that bloke in front of the customer because he’s going to be rude and call the customer stupid.” However, you then get someone else to chat to them and understand it. And then it’s me and the pre-sales engineer that would be making that pitch, or that chat, because I’m charming.

Felix (19:51)

No comment.

Alex (20:24)

So I think you’re right. I think cyber, there are more neurodivergent people in and about and I think that should be applauded, celebrated and, quite frankly, made use of. Without that, people like me would never stand a chance.

Felix (20:38)

Absolutely.

I think that’s a pretty good intro to our new, hopefully mini series of nerds versus suits, as you called it earlier. Let’s make that happen. Thank you everyone for listening. I hope you have enjoyed the show. Your reviews are really important to us. So if you haven’t already, give us a five star rating for this episode and for the podcast as a whole. And obviously you need to recommend us to all of your friends, your family, your grandmother, everybody, because we want everyone to see, hear, not just the nerds and the suits.